An Immersive System with Multi-modal Human-computer Interaction

Abstract

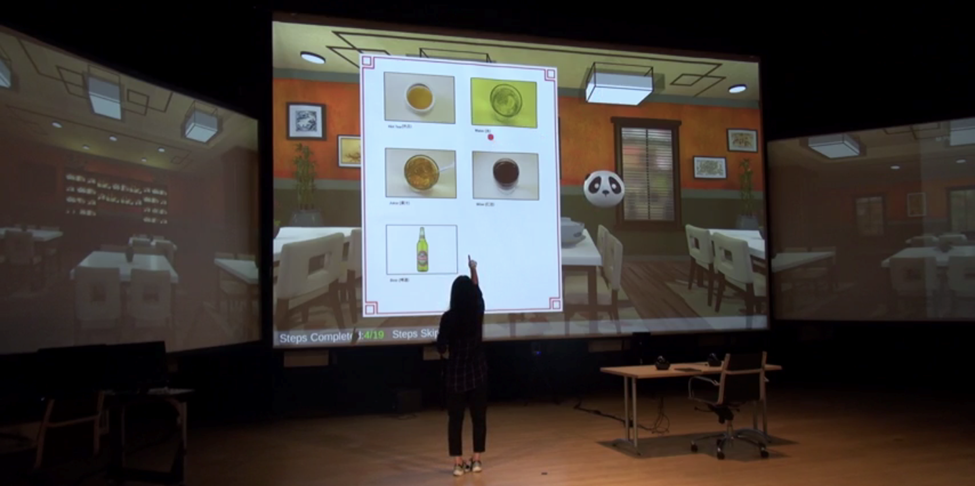

We introduce an immersive system prototype that integrates face, gesture, and speech recognition techniques to support multi-modal human-computer interaction. Embedded in an indoor room setting, a multi-camera system monitors the user's facial behavior, body gestures, and spatial location in the room. A server fuses the different sensor inputs in a time-sensitive manner so that the system knows who is doing what, and where, in real time. Correlating this information with speech input lets the system better understand the user's intention during interaction. We evaluate the performance of the core recognition techniques on both benchmark and self-collected datasets and demonstrate the benefit of the system in various use cases.
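As a rough illustration of what time-sensitive fusion of sensor inputs could look like, the sketch below picks, for each modality, the observation closest to a query time within a tolerance window and combines them into a who/what/where record. It is not the system's actual implementation: the `Observation` type, the `fuse` function, the modality names, and the 0.5-second window are all assumptions made for this example.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Optional, Tuple


@dataclass
class Observation:
    """One timestamped recognition result (hypothetical structure)."""
    timestamp: float  # seconds
    modality: str     # assumed labels: "face", "gesture", "location"
    value: str        # e.g. an identity, a gesture name, a room zone


def fuse(observations: Iterable[Observation], t: float,
         window: float = 0.5) -> Dict[str, Optional[str]]:
    """Combine the observation nearest to time t for each modality.

    Observations further than `window` seconds from t are ignored, so a
    stale result from one sensor is never paired with fresh ones.
    """
    nearest: Dict[str, Tuple[float, str]] = {}
    for obs in observations:
        gap = abs(obs.timestamp - t)
        if gap > window:
            continue
        if obs.modality not in nearest or gap < nearest[obs.modality][0]:
            nearest[obs.modality] = (gap, obs.value)

    def pick(modality: str) -> Optional[str]:
        return nearest[modality][1] if modality in nearest else None

    return {"who": pick("face"), "what": pick("gesture"), "where": pick("location")}
```

Called with observations from all three modalities close to the query time, `fuse` returns a complete record; a modality with no recent observation is reported as `None` rather than filled from stale data.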

Publication

13th IEEE Conference on Automatic Face and Gesture Recognition (FG) (Accepted)

Date

February, 2018